Fresh courtroom losses are putting Meta in an uncomfortable spotlight, not just for how it handled social media risks but for what the rulings could mean for the future of AI safety.

Both cases centered on a sensitive question: what happens when a company’s own research shows its products may be causing harm? Internal documents reviewed in court suggested the tech giant had early signals about troubling experiences among younger users on Instagram, including exposure to unwanted interactions. Other findings pointed to possible mental health benefits when people spent less time on social platforms.

While Meta maintains that some research was taken out of context, the legal outcomes highlight a growing dilemma for the tech sector. Studying the real-world impact of digital products can improve safety, but those same insights can later be used in lawsuits.

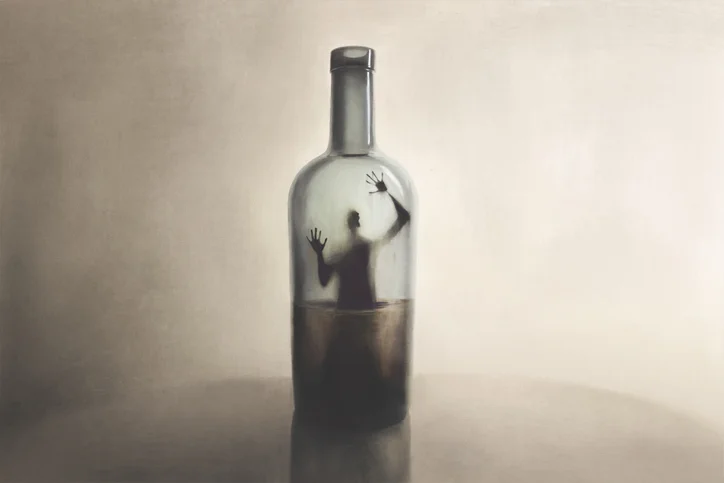

The debate isn’t limited to social media anymore. As companies like OpenAI and Google move quickly to release AI-powered tools, experts worry firms could become more cautious about funding research that reveals potential downsides.

The fallout from whistleblower Frances Haugen's disclosures already pushed the industry to rethink how openly it studies user impact. Now, some fear history could repeat itself, this time with AI.

Innovation may move fast, but without transparency, trust may struggle to keep up.
