By Shreyans Mehta

AI is cutting across user interfaces, skipping web and mobile layers and heading straight for APIs, potentially fracturing the app stack as we know it.

Much of the discussion around AI disruption has focused on its impact on search and user behaviour. Less attention has been given to how AI is fundamentally reshaping the underlying application stack. As AI agents increasingly bypass user interfaces and interact directly with APIs, they are creating new pathways through digital systems—raising critical questions about security, identity, and the future structure of enterprise architecture.

Discussion of AI’s disruptive influence has largely centred on how zero-click searches will affect search engine traffic, or on the need to clamp down on aggressive AI crawler bots that pillage sites for data. Much less attention has been paid to how AI might effectively rewire the infrastructure itself.

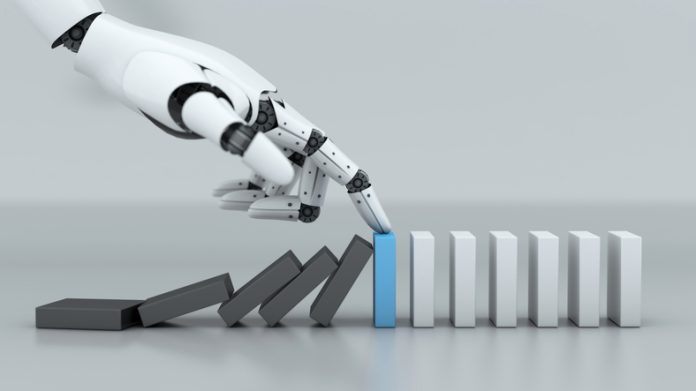

AI is creating ‘elephant pathways’, a term used to describe shortcuts created through repeated use, as it seeks to access data. But these pathways threaten to fracture the application stack, i.e. the software tools and technologies that are used to build, run, and manage applications. AI agents are cutting across user interfaces, skipping web and mobile layers as they head straight for Application Programming Interfaces (APIs). And it’s a problem that is set to worsen with the emergence of agentic AI. While LLM agents have sought to access data in response to prompts, agentic AI will be even more invasive, seeking access to tools and services as it attempts to carry out multiple steps to fulfil a request autonomously.

Taking the path of least resistance has marked ramifications for the security controls that protect these systems and processes. Those controls were built to govern human interactions and were adapted over time, with new measures coming along to manage bot interactions. We now have incident detection and response (IDR) tools that look to determine whether indicators of compromise are human or bot driven, for instance, while bot management solutions have sought to manage machine-to-machine interactions. They distinguish between ‘good’ and ‘bad’ bots, and even ‘grey’ bots that aren’t malicious but can be harmful to the business due to their intrusiveness or voracity.

Bots doing business

What nobody expected or planned for was the bot with a brain. These bots are going to want to conduct research, prioritise and organise, reserve goods and services, and carry out purchases, all without a human. As most apps will look to verify that the interaction is with a human, that presents a problem. The payments industry is already looking to resolve this by requiring agents to prove their identities. Payment providers are assigning agents identifiers and digital wallets with funding options that will allow them to be verified, to log in, and to pay for and consume goods and services over peer-to-peer connections.

If these payment verification processes are then combined with API protection, the ecosystem can ensure that malicious agents seeking to scrape, abuse, or defraud the site are blocked. Traffic can be analysed to look for instances of abnormal behaviour or intent so that, even if an agent goes rogue, it can be detected and stopped. This form of bot management happens at the edge, without the need to modify apps or bolt on third-party tools, which makes it much more agile.
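As a rough illustration of what analysing traffic for abnormal behaviour can mean in practice, the sketch below flags an agent that makes more API calls than a set limit within a sliding time window. The `RateMonitor` class, its thresholds, and the agent identifier are all hypothetical; real bot management products weigh many more signals than call rate.

```python
from collections import defaultdict, deque


class RateMonitor:
    """Hypothetical sliding-window check on API calls per agent."""

    def __init__(self, max_calls: int, window_s: float):
        self.max_calls = max_calls  # assumed limit, chosen for illustration
        self.window_s = window_s    # assumed window length in seconds
        self.calls = defaultdict(deque)

    def observe(self, agent_id: str, timestamp: float) -> bool:
        """Record one call; return True if the agent is over the limit."""
        recent = self.calls[agent_id]
        recent.append(timestamp)
        # Drop calls that have fallen out of the window.
        while recent and recent[0] <= timestamp - self.window_s:
            recent.popleft()
        return len(recent) > self.max_calls


monitor = RateMonitor(max_calls=3, window_s=10.0)
burst = [monitor.observe("agent-1", t) for t in (0.0, 1.0, 2.0, 3.0)]
later = monitor.observe("agent-1", 20.0)
```

Here the fourth call in quick succession trips the limit, while a call made after the window has passed does not.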

However, while the payments industry is making inroads into planning for an agentic future, the same cannot be said for conventional businesses. If we again look to identity access and authorisation, organisations have spent months if not years implementing zero trust network access (ZTNA) to radically improve security through a ‘never trust, always verify’ mindset with continuous authentication and authorisation. Yet many are riding roughshod over these rules when rolling out AI.

ZTNA’s chief remit is to establish whether an access request comes from a human with the appropriate level of clearance. For the same approach to be taken with AI agents, we will need to validate their authenticity and access permissions. Very few businesses are looking to join their AI projects with their ZTNA at this point, which is worrying. What they should be doing is looking at ways for the agent to temporarily ‘borrow’ the identity of the user making the prompt in order to satisfy ZTNA requirements.
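One way to picture an agent ‘borrowing’ a user’s identity is a short-lived, signed delegation token that names both the user and the agent and lists what the agent may do. The sketch below is a minimal illustration under stated assumptions, not a description of any ZTNA product: the function names, the claim layout, the scope strings, and the hard-coded demo secret are all hypothetical, and a production system would more likely rely on an established standard for delegated tokens.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

SECRET = b"demo-only-secret"  # assumption: real systems would use a managed key


def issue_delegation(user: str, agent: str, scopes: List[str],
                     ttl_s: int = 300, now: Optional[float] = None) -> str:
    """Sign a short-lived token saying `agent` acts on behalf of `user`."""
    now = time.time() if now is None else now
    claims = {"sub": user, "act": agent, "scopes": scopes, "exp": now + ttl_s}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_delegation(token: str, required_scope: str,
                      now: Optional[float] = None) -> bool:
    """Accept only an untampered, unexpired token that carries the scope."""
    now = time.time() if now is None else now
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    return now < claims["exp"] and required_scope in claims["scopes"]


token = issue_delegation("alice", "agent-1", ["crm:read"], ttl_s=60, now=1000.0)
```

The point of the design is that access expires quickly and never exceeds what the user has delegated, so every request the agent makes can still be verified rather than trusted.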

MCP as a mask

This is all well and good for standard network models, but what happens when that AI agent interacts via an MCP server? Model Context Protocol (MCP) enables LLMs and agents to communicate with APIs without the need to resort to code. These servers act as a common means of communication and a conduit, just as APIs did for apps, enabling the AI to access information, tools, and services that would previously have taken months to build interfaces for. But they also effectively act as a proxy, hiding the true identity and intent of the prompter, which means they could be used for nefarious purposes.

Anthropic’s revelation that a suspected nation-state actor from China recently manipulated its Claude Code tool is a case in point. MCP servers were used to perform actions and gather data from scan, search, data retrieval and code analysis tools before convincing the AI to engage in attacks against 30 global organisations. Anthropic estimates that 80-90% of the campaign was carried out by AI, with a human only taking part at 4-6 decision points during the attack, further demonstrating the potential for AI to minimise the human in the loop (HITL).

Anthropic claims the attack has enabled it to develop better classifiers to flag malicious activity, and the suggestion is that organisations will have to get better at detecting and investigating such attacks. While AI might be disregarding the existing architecture of the internet, the technology also holds the secret to how we can defend against them.

Rolling out AI projects and standing up passive MCP servers, or using third-party MCP servers without validating their trustworthiness, increases the exposure of the business. But there are steps that can be taken to help pave those elephant pathways and make them fit for purpose.

Putting in place an AI Gateway, for instance, can enable the organisation to create or connect to MCP servers through a trusted server registry. AI traffic flowing through those servers can be monitored to track user and agent behaviour as well as the applications being accessed and the API calls being made. Suspicious activity can then be flagged for investigation, so that the AI is not left to its own devices. This is important because, as shown with Claude Code, even trusted AI can be subverted.
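The registry-and-monitoring idea can be sketched in a few lines. The example below is purely illustrative: `AIGateway`, its method names, and the example server URLs are hypothetical and do not describe any particular product. It records every call an agent attempts, allows only calls to registered MCP servers, and sets aside anything else for investigation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class AIGateway:
    """Hypothetical gateway with a trusted MCP server registry and audit log."""
    trusted_servers: Set[str] = field(default_factory=set)
    audit_log: List[Dict[str, str]] = field(default_factory=list)
    flagged: List[Dict[str, str]] = field(default_factory=list)

    def register(self, server_url: str) -> None:
        """Add a vetted MCP server to the registry."""
        self.trusted_servers.add(server_url)

    def route(self, user: str, agent: str, server_url: str, tool: str) -> bool:
        """Log the call; allow it only if the target server is registered."""
        record = {"user": user, "agent": agent, "server": server_url, "tool": tool}
        self.audit_log.append(record)
        if server_url not in self.trusted_servers:
            self.flagged.append(record)  # held for human investigation
            return False
        return True


gateway = AIGateway()
gateway.register("https://mcp.internal.example/crm")
allowed = gateway.route("alice", "agent-1", "https://mcp.internal.example/crm", "lookup_customer")
blocked = gateway.route("alice", "agent-1", "https://mcp.unknown.example", "scan")
```

Because both the user and the agent are recorded against each call, the log keeps the identity that an MCP server acting as a proxy would otherwise hide.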

What we must accept is that it’s in the nature of communications to find the quickest path and to expedite instructions as efficiently as possible. That means there will be casualties among the current systems and processes we use to get things done. Some form of tech stack collapse will be inevitable. But if we can plan for the demolition and put in place the foundations for these new ways of working, we can ensure security isn’t among the casualties.
