Why AI Still Disappoints at the Organisational Level

By Joerg Niessing, Carsten Feldmann, and Michael Bücker

Agentic AI offers a step-change advance over its generative predecessor, but to maximise its advantages you need to understand the additional risks it poses and how to mitigate them. You also need to know how to elicit the desired behaviour from it.

Artificial intelligence has advanced at breathtaking speed. In just a few years, organisations have gained access to tools that can write, analyse, design, and synthesise information at near-human levels. Many executives expected this new wave of generative AI to fundamentally transform productivity, decision quality, and decision speed across the enterprise.

In practice, however, the results often fall short of these expectations. Teams produce more content, more analyses, and more presentations than ever before, yet the outcomes that matter most – growth, conversion, quality, compliance, and trust – frequently remain unchanged. In some cases, they even deteriorate as complexity increases and coordination costs rise.

This gap between promise and performance points to a structural problem. A recent MIT study finds that although more than 80 per cent of organisations have experimented with generative AI, only around 5 per cent of pilots deliver measurable financial returns or are scaled into production workflows. The result is a widening “GenAI divide” between experimentation and value capture. Similarly, research by the Boston Consulting Group shows that only about 5 per cent of firms globally generate meaningful enterprise value from AI, despite growing investment and deployment.

The core issue is not model capability. It is that most AI initiatives are layered onto organisational designs shaped by earlier generations of automation.

From RPA to IDP – and Their Limits

Early automation efforts focused on Robotic Process Automation (RPA). These systems excel at executing predefined rules across stable, repetitive workflows. Their strength lies in determinism: given the same input, the same output reliably follows. This reliability, however, comes at a cost. Processes must be fully specified in advance, leaving little room for ambiguity, exceptions, or contextual judgment.

To extend automation into less structured domains, organisations adopted Intelligent Document Processing (IDP). By combining optical character recognition (OCR), machine learning, and classification models, IDP enabled the extraction of information from documents, emails, and forms. Yet once information was extracted, decision-making still relied on rule-based logic. Complexity was reduced rather than embraced.

As a result, both RPA and IDP optimised how work was executed, not how decisions were made. Automation remained confined to domains where logic could be exhaustively specified. Large parts of organisational work – judgment-heavy, context-dependent, and exception-rich – remained outside its reach.

Agentic AI: Automation Without Exhaustive Rules

Agentic AI shifts this boundary. For the first time, organisations can automate processes that were previously considered non-automatable, not because data was unavailable, but because specifying all relevant rules was infeasible. Agents can reason over context, interpret ambiguous inputs, select tools dynamically, and adapt their actions over time.

This represents a fundamental break from earlier automation paradigms. Instead of encoding decision logic explicitly, organisations increasingly rely on probabilistic reasoning performed by large language models (LLMs). Automation no longer depends on complete rulebooks.

However, this new capability introduces a new risk. Large language models are probabilistic systems. They can hallucinate, produce subtle errors, or follow plausible but incorrect reasoning paths. Their outputs are often fluent and persuasive, which makes mistakes difficult to detect. In high-stakes processes, where errors cannot be tolerated, this non-determinism becomes a critical challenge. Error rates must be empirically assessed, and mitigation strategies must be deliberately designed. This tension – unprecedented automation potential combined with probabilistic uncertainty – defines the organisational challenge of agentic AI.

From Autonomy to Elicitation: What Agentic AI Really Is

Generative AI is by now widely understood. LLMs respond to prompts by producing text, images, code, or analyses. Their logic is fundamentally reactive: an input triggers an output. They generate artefacts, but they do not participate in processes.

Agentic AI follows a different organising principle. Agents pursue objectives over time. They decompose goals into steps, interact with tools and systems, evaluate intermediate results, and adapt their behaviour based on feedback. Rather than responding to isolated prompts, they operate within ongoing workflows. Crucially, this does not require full autonomy, nor does it imply continuous human intervention. The defining feature of agentic systems is not how much freedom they have, but how their behaviour is shaped in advance.

This can be described as elicitation. Instead of correcting agents during execution, organisations design the decision space in which agents operate. Objectives, constraints, domain definitions, acceptable actions, quality criteria, and escalation thresholds are explicitly specified before the system is deployed.
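
As a rough illustration of what such a pre-specified decision space might look like in software, the Python sketch below encodes one as data fixed before deployment, together with the check an agent's proposed action would pass through. The class `DecisionSpace`, its fields, the simple forbidden-term matching, and the 0.8 confidence threshold are all hypothetical choices made for this example; they are not drawn from any particular product or from the framework described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionSpace:
    """Hypothetical deploy-time specification of where an agent may act."""
    objective: str
    allowed_actions: frozenset[str]     # the agent's decision rights
    forbidden_terms: frozenset[str]     # e.g. claims the brand may never make
    min_confidence: float               # below this, the agent must escalate

    def check(self, action: str, text: str, confidence: float) -> str:
        """Return 'proceed', 'escalate', or 'reject' for a proposed action."""
        if action not in self.allowed_actions:
            return "reject"             # outside the agent's decision rights
        if any(term in text.lower() for term in self.forbidden_terms):
            return "escalate"           # a constraint may be breached: human judgment needed
        if confidence < self.min_confidence:
            return "escalate"           # too uncertain to act alone
        return "proceed"


space = DecisionSpace(
    objective="increase campaign conversion",
    allowed_actions=frozenset({"draft_copy", "propose_segment"}),
    forbidden_terms=frozenset({"guaranteed returns"}),
    min_confidence=0.8,
)

print(space.check("draft_copy", "Save time on payments", 0.92))  # proceed
print(space.check("send_email", "Save time on payments", 0.92))  # reject
print(space.check("draft_copy", "Guaranteed returns!", 0.95))    # escalate
```

Because the specification is frozen data rather than logic scattered through prompts, it can be reviewed by legal or compliance functions before go-live and versioned like any other policy.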

Agents act within these designed boundaries. Autonomy, where it exists, is not absolute; it is elicited. It emerges from a structured environment rather than from the absence of control.

This perspective moves beyond the simplistic dichotomy of “fully autonomous” versus “human-in-the-loop.” Humans are not emergency brakes who intervene only when something goes wrong. They are designers of context, responsibility, and judgment.

Seen this way, the core distinction becomes clear: generative AI produces outputs; agentic AI participates in processes. It carries responsibility for progress toward an objective, even when final authority remains with humans. Agents must therefore be designed with roles, handovers, validation steps, and measurable success criteria – the same elements that define accountable work in any organisation.

Design Implications: How Agentic AI Creates Value

This reframing leads to three design implications. First, value shifts from task efficiency to process performance. Assistants improve individual productivity. Agents improve end-to-end outcomes such as conversion, compliance, or risk reduction. This requires redesigning processes around objectives rather than prompts.

Second, elicitation becomes a core organisational capability. The quality of agent behaviour depends less on model intelligence and more on how well goals, constraints, and evaluation criteria are elicited. Poor elicitation scales hallucinations. Good elicitation scales trust.

Third, governance must be built into execution rather than added afterward. Because agentic systems operate probabilistically, supervision cannot rely solely on ex-post review. Validation agents, confidence thresholds, escalation rules, and traceability must be integral parts of process design.

In this sense, LLMs are best understood not as standalone tools, but as cognitive engines embedded within deliberately designed socio-technical systems. Without such design, they merely accelerate existing inefficiencies. With it, they enable a new generation of automation that finally bridges the gap between adoption and value creation.

Context and Constraints in Regulated Environments

To understand why agentic AI so often disappoints – and where it can truly excel – it is useful to consider a “hard case.” Regulated environments such as payments, financial services, or critical infrastructure combine three constraints that are hostile to naive automation: strict privacy and transparency requirements, long and multi-stakeholder decision cycles, and a high need for internal alignment across business, legal, risk, and compliance functions.

In these settings, conventional GenAI pilots often fail for predictable reasons: outputs cannot be audited, claims cannot be reliably verified, and the path from generated content to measurable commercial outcomes is too complex to manage through isolated prompts. Privacy concerns further limit experimentation, as sensitive data cannot simply be sent to external systems without clear control and accountability.

Agentic approaches change this calculus. Because agents are embedded into deliberately designed processes, organisations can make explicit architectural choices about how and where models are used. In particularly sensitive contexts, this may include running language models in controlled environments or limiting external model access to narrowly defined, non-sensitive tasks. Privacy and data protection thus become design parameters rather than absolute barriers.

Paradoxically, these are exactly the environments where agentic AI can create disproportionate value – not by being more autonomous, but by being more structured. If agents can operate reliably under such constraints, the approach becomes transferable to many other industries. The key is to treat constraints as design inputs rather than obstacles to overcome.

Why Autonomy Alone Creates Risk, Not Value

Much of the current enthusiasm around agentic AI focuses on autonomy. Vendors showcase agents that can independently complete tasks, coordinate with other agents, or operate with minimal human intervention. In simple environments, this can work. In complex organisational settings, it often backfires.

Most enterprises operate under constraints that cannot be ignored. Regulatory requirements, brand standards, data protection rules, and accountability structures shape how decisions are made. Unbounded autonomy undermines trust and quickly triggers resistance from legal, compliance, and risk functions.

Organisations that succeed with agentic AI therefore do not aim for maximum autonomy. They design for bounded agency. Agents are empowered to act, but only within clearly defined roles, decision rights, and escalation paths. Human oversight is not an afterthought; it is part of the architecture.

This shift in mindset is essential. Agentic AI is not primarily a technology problem to be solved by better models. It is an organisational design challenge.

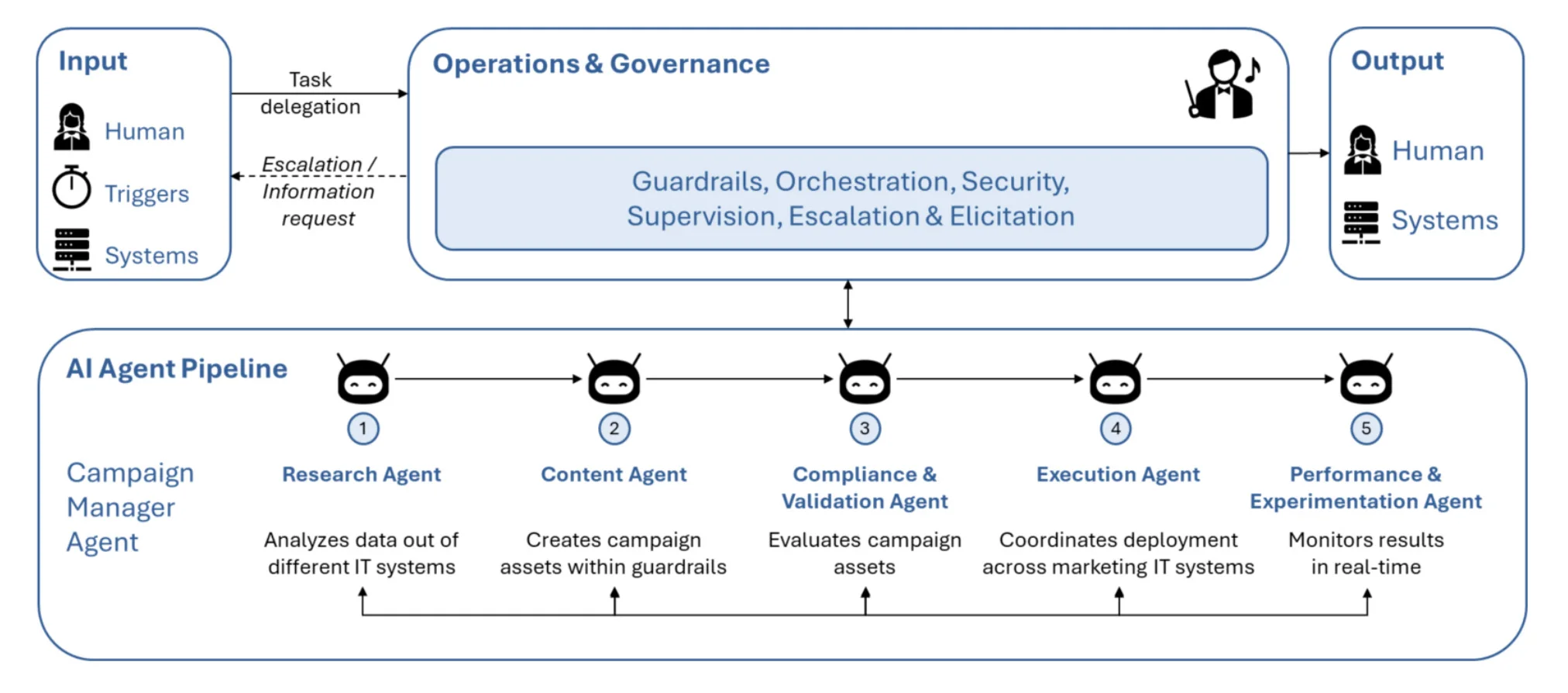

Example: The Campaign Manager Agent as an Agentic Pipeline

To make the abstract notion of agentic pipelines more tangible, consider the case of a Campaign Manager Agent in a regulated marketing environment (Source: “Faster. Smarter. Autonomous. Real examples of AI Agents in action”. White paper by UiPath, 2025). In many organisations, campaign management is a fragmented, labour-intensive process. Human teams move from ideation to content creation, data extraction, compliance review, execution, and performance analysis through a series of loosely connected tools. While generative AI can accelerate individual steps, such as drafting copy or analysing results, the end-to-end process remains slow, error-prone, and difficult to govern.

Figure 1: Campaign Manager Agent in Marketing as an Agentic Pipeline

In contrast, an AI-powered agent system streamlines the entire campaign process, from ideation to execution and performance monitoring, enhancing marketing agility and efficiency (see Figure 1). The Campaign Manager Agent reframes this workflow as a coordinated sequence of specialised agent roles, each embedded within clear constraints and handover rules.

The process begins with campaign ideation and setup. (1) A research-oriented agent analyses historical campaign data, customer segments, and performance benchmarks to propose campaign hypotheses. These are not free-form ideas, but structured proposals aligned with predefined objectives and KPIs. Next, (2) a content generation agent creates campaign assets – text, variants, or creatives – within explicit brand, tone, and regulatory guardrails. Outputs are generated against approved templates and claims libraries, reducing the risk of non-compliant messaging.

(3) A compliance and validation agent then evaluates these assets before execution. Rather than relying on post-hoc human review, this agent checks claims, disclosures, and language against policy rules and regulatory requirements. Only validated outputs move forward; exceptions are escalated to human reviewers. Once assets are approved, (4) an execution agent coordinates deployment across marketing IT systems, triggering actions in tools such as CRM, ad platforms, or automation software. Importantly, this agent does not “decide” strategy; it executes within narrowly defined parameters. Finally, (5) a performance and experimentation agent monitors results in real time. It evaluates campaign performance against success criteria, proposes A/B test variants, and feeds structured insights back into the pipeline. These insights improve subsequent campaigns rather than remaining isolated analytics outputs.
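
The gating logic of such a validation step can be sketched in a few lines of Python. This is a deliberately simplified, hypothetical version: real claims are unlikely to arrive as neatly pre-extracted strings, and a production agent would probably use a language model or classifier to extract and match them. What the sketch shows is the control flow described above: only fully validated assets move to execution, and everything else is routed to a human reviewer. `APPROVED_CLAIMS`, `REQUIRED_DISCLOSURE`, and the example claims are invented for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical policy inputs; in practice these would come from approved, versioned sources.
APPROVED_CLAIMS = {"no setup fee", "settlement within one business day"}
REQUIRED_DISCLOSURE = "terms and conditions apply"


@dataclass
class ValidationResult:
    approved: bool
    issues: list[str] = field(default_factory=list)


def validate_asset(claims: list[str], body: str) -> ValidationResult:
    """Approve an asset only if every claim is pre-approved and the disclosure is present."""
    issues = [f"unapproved claim: {c!r}" for c in claims if c.lower() not in APPROVED_CLAIMS]
    if REQUIRED_DISCLOSURE not in body.lower():
        issues.append("missing disclosure")
    return ValidationResult(approved=not issues, issues=issues)


def route(result: ValidationResult) -> str:
    # Validated assets move on to execution; anything else goes to a human reviewer.
    return "execute" if result.approved else "human_review"


ok = validate_asset(["No setup fee"], "No setup fee. Terms and conditions apply.")
bad = validate_asset(["Lowest fees in Europe"], "Lowest fees in Europe!")
print(route(ok), route(bad))  # execute human_review
print(bad.issues)
```

Keeping the issue list alongside the verdict means the human reviewer receives the reason for escalation, not just a flag.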

Across the entire pipeline, every agent action is logged, traceable, and measurable. Human involvement is deliberate and targeted; approvals occur at predefined risk points, not as emergency interventions after problems emerge.
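
A minimal sketch of what “logged and traceable” can mean in practice follows, assuming a single trace identifier shared by every agent step in one campaign run. The `AuditLog` class, its event types, and the agent names are hypothetical; a real deployment would write to durable, access-controlled storage rather than an in-memory list.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    trace_id: str   # one id per campaign run, shared by every agent step
    agent: str
    event: str      # e.g. "action", "handover", "escalation", "approval"
    detail: str
    at: str         # UTC timestamp in ISO 8601 format


class AuditLog:
    """Append-only, in-memory log (illustrative only)."""

    def __init__(self) -> None:
        self._events: list[AuditEvent] = []

    def record(self, trace_id: str, agent: str, event: str, detail: str) -> None:
        at = datetime.now(timezone.utc).isoformat()
        self._events.append(AuditEvent(trace_id, agent, event, detail, at))

    def trace(self, trace_id: str) -> list[AuditEvent]:
        """Reconstruct, in order, everything that happened in one run."""
        return [e for e in self._events if e.trace_id == trace_id]

    def to_jsonl(self) -> str:
        """Export one JSON object per line, e.g. for an audit team."""
        return "\n".join(json.dumps(asdict(e)) for e in self._events)


log = AuditLog()
log.record("cmp-001", "content_agent", "handover", "3 variants to compliance_agent")
log.record("cmp-001", "compliance_agent", "escalation", "unapproved claim in variant B")
log.record("cmp-002", "research_agent", "action", "segment analysis")

for e in log.trace("cmp-001"):
    print(e.agent, e.event)
# content_agent handover
# compliance_agent escalation
```

Reconstructing a run is then a filter on the trace identifier, which keeps after-the-fact review cheap.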

The value of this system does not stem from any single agent’s intelligence. It emerges from role clarity, structured handovers between orchestrated agents, and built-in governance. In practice, organisations deploying such agentic pipelines report not only reduced manual effort and faster execution, but also more consistent decision quality, fewer compliance issues, and greater managerial trust in AI-supported outcomes. In this UiPath case study, campaign preparation time was reduced from more than 20 hours to a small fraction. Content errors decreased sharply, minimising rework. The accelerated process enabled faster go-to-market and allowed teams to shift their focus to higher-impact decisions.

From Isolated Tools to Designed Pipelines

Based on our research, we propose a framework for embedding agentic AI into core organisational processes in a scalable and governable way. The framework does not prescribe specific technologies or models. Instead, it focuses on how work is structured, controlled, and distributed between humans, agents, and systems.

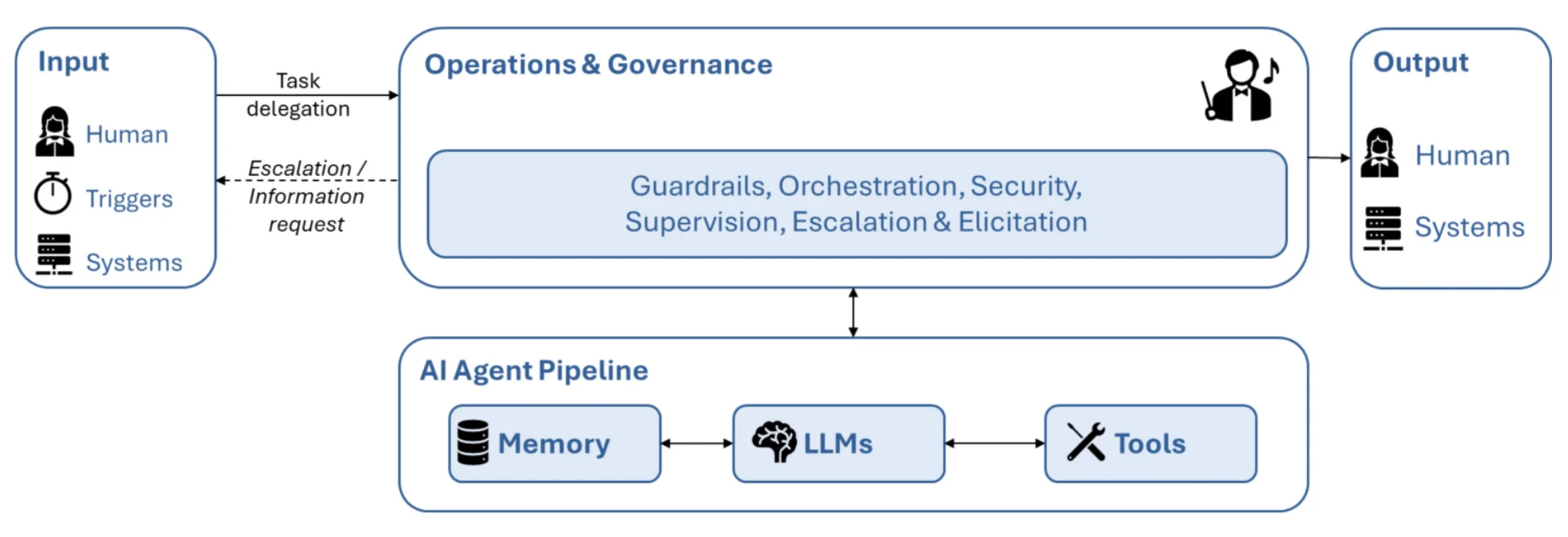

Figure 2: Agentic AI Framework – Elements & Interactions

At its core, the framework treats agentic AI as a process pipeline rather than a collection of isolated tools. Work enters the system through defined inputs, is executed by agents within a governed environment, and produces outputs that are either consumed by humans or enacted in business systems.

Inputs can originate from three sources: human task delegation, scheduled or event-based triggers, and upstream business IT systems. Importantly, these inputs do not directly activate autonomous behaviour. They are routed through an operations and governance layer that defines what agents are allowed to do, under which conditions, and with which constraints.

This operations and governance layer forms the control plane of the system. It encompasses guardrails, orchestration logic, security controls, supervision mechanisms, and explicit escalation and elicitation rules. Rather than relying on ad hoc oversight, organisations specify in advance how decisions are made, which actions are permitted, and when execution must pause. This is where accountability is designed into the system.

A central function of this layer is runtime elicitation. When predefined thresholds related to uncertainty, risk, or missing information are exceeded, the system explicitly requests additional input. This may involve eliciting clarification or approval from a human decision-maker, or requesting information from connected business systems. Human involvement is therefore not continuous, but conditional and rule-based. Humans are not emergency brakes; they are engaged deliberately at moments where judgment or responsibility is required.
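
The conditional, rule-based character of runtime elicitation can be illustrated with a small routing function. The sketch assumes that each agent step reports a confidence score, a risk score, and the set of inputs it is still missing; the names `Route`, `Thresholds`, and `elicit`, and the threshold values, are illustrative assumptions rather than part of the framework.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PROCEED = "proceed"
    ASK_SYSTEM = "ask_system"  # request missing data from a connected business system
    ASK_HUMAN = "ask_human"    # request clarification or approval from a person


@dataclass(frozen=True)
class Thresholds:
    # Illustrative values; in practice they are agreed before deployment.
    min_confidence: float = 0.75
    max_risk: float = 0.3


def elicit(confidence: float, risk: float, missing: set[str],
           system_fields: set[str], t: Thresholds = Thresholds()) -> Route:
    """Decide whether an agent step may continue or must request more input."""
    if risk > t.max_risk:
        return Route.ASK_HUMAN  # high-risk steps always need a responsible person
    if missing:
        # Ask a system only if every missing field is one a system can supply.
        return Route.ASK_SYSTEM if missing <= system_fields else Route.ASK_HUMAN
    if confidence < t.min_confidence:
        return Route.ASK_HUMAN  # too uncertain to act alone
    return Route.PROCEED


print(elicit(0.9, 0.1, set(), {"segment_size"}).value)             # proceed
print(elicit(0.9, 0.1, {"segment_size"}, {"segment_size"}).value)  # ask_system
print(elicit(0.9, 0.5, set(), set()).value)                        # ask_human
```

The ordering encodes one possible design choice: high-risk steps always go to a person, missing data is fetched from systems before people are asked, and low confidence alone is enough to pause execution.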

Beneath this control plane sits the agent execution layer (“AI Agent Pipeline”). Here, one or more AI agents perform the actual work by combining large language models with memory and tools. Agents reason over context, retrieve and store information, invoke tools, and generate intermediate or final results. Crucially, they do so within the boundaries defined by the governance layer. Their autonomy is bounded by design.

Effective systems rarely rely on a single, general-purpose agent. Instead, work is distributed across specialised agents with clearly defined roles and handovers. One agent may analyse inputs and structure a problem. Another may generate options or materials. A third may validate outputs against policies or constraints. Value does not arise from the intelligence of any single agent, but from the quality of their interaction. In high-performing designs, agents exchange structured outputs, assumptions, and confidence indicators rather than free-form text, creating a coherent pipeline rather than a sequence of disconnected actions.
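
The difference between structured handovers and free-form text can be sketched as follows. Each hypothetical agent here is a plain Python function standing in for an LLM-backed agent, and the `Handover` record carries the payload, the assumptions made so far, and a confidence indicator from one role to the next. The field names, the example segment, and the rule that confidence can only stay flat or fall along the pipeline are assumptions made for this illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Handover:
    """Structured output passed between agents instead of free-form text."""
    producer: str
    payload: dict
    assumptions: tuple[str, ...] = ()
    confidence: float = 1.0


def analyse(h: Handover) -> Handover:
    # Stand-in for a research agent: adds a target segment and states its assumption.
    return Handover("analyst", {**h.payload, "segment": "SMB merchants"},
                    assumptions=h.assumptions + ("Q3 data is representative",),
                    confidence=0.85)


def generate(h: Handover) -> Handover:
    # Stand-in for a content agent: drafts copy, never more confident than its input.
    draft = f"Faster payouts for {h.payload['segment']}"
    return Handover("generator", {**h.payload, "draft": draft},
                    assumptions=h.assumptions, confidence=min(h.confidence, 0.9))


def validate(h: Handover) -> Handover:
    # Stand-in for a validation agent: a toy policy check on the draft.
    ok = "guaranteed" not in h.payload["draft"].lower()
    return Handover("validator", {**h.payload, "approved": ok},
                    assumptions=h.assumptions, confidence=h.confidence if ok else 0.0)


def run(stages: list[Callable[[Handover], Handover]], start: Handover) -> list[Handover]:
    """Run stages in order, keeping every handover so the chain can be inspected."""
    trail = [start]
    for stage in stages:
        trail.append(stage(trail[-1]))
    return trail


trail = run([analyse, generate, validate], Handover("user", {"goal": "conversion"}))
final = trail[-1]
print(final.payload["approved"], final.confidence, final.assumptions)
# True 0.85 ('Q3 data is representative',)
```

Because every handover is kept, a reviewer can see which assumptions the final output rests on and at which step confidence was lost.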

Outputs of the system flow either to humans or to business IT systems. Outputs to humans may take the form of recommendations, decisions, or requests for approval. Outputs to systems may trigger updates, transactions, or downstream processes. Because all actions, handovers, and elicitation events are logged and traceable, the system is auditable by design.

The framework also makes clear that success cannot be measured through activity metrics alone. Speed and volume are easy to capture, but they say little about value. Agentic pipelines must be evaluated based on outcome quality, stability over time, reduction in errors, and the frequency and nature of human intervention. These performance indicators must be complemented by governance and risk metrics, such as policy violations, unverifiable claims, hallucinations, or audit exceptions.
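
As one possible way to put outcome and governance indicators side by side, the sketch below computes a small scorecard from per-run records. The `RunRecord` fields cover only a subset of the indicators named above (hallucinations and audit exceptions would need their own detection processes) and are illustrative, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """One completed pipeline run (hypothetical fields)."""
    succeeded: bool           # met its business success criterion
    human_interventions: int
    policy_violations: int
    unverifiable_claims: int


def scorecard(runs: list[RunRecord]) -> dict[str, float]:
    """Outcome and governance metrics together, rather than activity counts."""
    n = len(runs)
    if n == 0:
        raise ValueError("no runs to evaluate")
    return {
        "success_rate": sum(r.succeeded for r in runs) / n,
        "intervention_rate": sum(r.human_interventions > 0 for r in runs) / n,
        "violations_per_run": sum(r.policy_violations for r in runs) / n,
        "unverifiable_claims_per_run": sum(r.unverifiable_claims for r in runs) / n,
    }


runs = [RunRecord(True, 0, 0, 0), RunRecord(True, 1, 0, 1),
        RunRecord(False, 2, 1, 0), RunRecord(True, 0, 0, 0)]
print(scorecard(runs))
```

Note that nothing in this scorecard measures speed or volume; those can be tracked too, but on their own they say little about value.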

In the case example discussed earlier, adopting this pipeline-oriented, governed approach led to tangible results. Decision quality improved, manual rework declined, and compliance issues became rarer. Just as importantly, managers regained confidence in the system because they could understand how conclusions were reached and where responsibility lay.

Taken together, the framework illustrates a central claim of this article: agentic AI creates value not by maximising autonomy, but by embedding probabilistic reasoning into accountable, end-to-end organisational processes.

What Makes Agentic Pipelines Different

At first glance, the framework described above may not appear revolutionary. Many organisations already use AI in multiple parts of their operations. The difference lies not in individual components, but in how they are integrated and governed.

Agentic pipelines are designed end-to-end. Agents are not deployed as clever add-ons, but embedded into processes that already matter to the business. Their outputs are structured so they can be consumed by other agents or humans without reinterpretation. Governance mechanisms are not bolted on after the fact, but actively shape execution throughout the process.

This integration changes how systems behave over time. Because agents share context, assumptions, and structured feedback within a governed pipeline, errors are less likely to be repeated blindly. Performance becomes measurable, learning becomes systematic, and improvement becomes manageable rather than accidental.

Hard Learnings: Why Many Agent Initiatives Stall

The most valuable lessons from early agentic deployments are often uncomfortable. Many initiatives fail not because agents are incapable, but because organisations underestimate the operating conditions required for scalable performance.

One common failure mode is poor data hygiene paired with high expectations. When underlying data is inconsistent, fragmented, or misaligned with real decision points, agents amplify noise and create the illusion of productivity while quietly degrading quality.

A second pitfall is undocumented or inconsistent processes. Agentic AI needs a process to inhabit. When workflows exist only as tacit knowledge, agents cannot be reliably embedded and escalation to humans becomes chaotic rather than deliberate. A third – and perhaps most frequent – issue is the absence of rigorous evaluation. Without outcome and risk metrics, teams default to proxy measures such as “time saved,” which can mask harmful errors or compliance exposure.

Finally, unclear ownership is a silent killer. When no one is accountable for the end-to-end pipeline, agents become everyone’s tool and no one’s system.

The remedies are practical, but they require leadership attention. Organisations need explicit policy catalogues and guardrails, well-defined handover and escalation points, and a shift from proxy KPIs to outcome KPIs. They also need named owners for the pipeline – not just for the model, the prompt library, or the vendor relationship. Scaling agentic AI is therefore less about deploying technology and more about establishing operational discipline.

From Concept to Execution: A Pragmatic Rollout

A common objection to agentic AI is that it sounds conceptually appealing but operationally complex. In practice, organisations can move faster than expected if they resist the temptation to over-engineer.

In our experience, a 3–4-month rollout is sufficient to move from concept to a functioning pilot, provided the focus remains on process design rather than technology selection. Critical steps include defining success metrics upfront, mapping the full workflow, assigning clear decision rights to agents, and specifying escalation and elicitation rules before deployment.

This rollout typically unfolds in three phases. The discovery phase clarifies the process, identifies decision points, and defines success beyond productivity gains. The pilot phase embeds a small number of agents into a narrow, end-to-end workflow segment with strict human oversight and logging. The scale phase begins only once the system proves stable under governance, meaning that outcomes improve while risk indicators remain within acceptable bounds.
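
The exit criterion for the pilot phase can be made explicit as a simple gate. In this hypothetical sketch, “stable under governance” is interpreted as the outcome metric beating a pre-pilot baseline in every pilot period while every risk indicator stays within agreed limits; the function name, metric names, and limits are invented for illustration and would in practice be set during the discovery phase.

```python
def ready_to_scale(baseline: dict[str, float], pilot: list[dict[str, float]],
                   risk_limits: dict[str, float], outcome: str = "success_rate") -> bool:
    """Hypothetical gate: the outcome beats the baseline AND all risk metrics stay
    within limits in every pilot period (stability, not one good week)."""
    if not pilot:
        return False  # no evidence yet
    for period in pilot:
        if period[outcome] <= baseline[outcome]:
            return False
        if any(period[metric] > limit for metric, limit in risk_limits.items()):
            return False
    return True


baseline = {"success_rate": 0.60}
limits = {"violations_per_run": 0.05, "intervention_rate": 0.30}
pilot = [
    {"success_rate": 0.68, "violations_per_run": 0.02, "intervention_rate": 0.25},
    {"success_rate": 0.71, "violations_per_run": 0.00, "intervention_rate": 0.20},
]
print(ready_to_scale(baseline, pilot, limits))  # True

# One period with a risk breach is enough to hold back scaling.
pilot.append({"success_rate": 0.74, "violations_per_run": 0.08, "intervention_rate": 0.15})
print(ready_to_scale(baseline, pilot, limits))  # False
```

Requiring every period to pass, rather than an average, is a deliberately conservative choice that reflects the emphasis on stability.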

What slows organisations down is rarely technical capability. It is the absence of clarity about responsibility. Once this is resolved, implementation accelerates.

Do-Now Summary: A 10-Point Checklist to Move from Pilot to Pipeline

Most organisations do not fail because they lack AI capability; they fail because they scale before they have designed the operating system around it. The following checklist captures the minimum set of decisions leaders need to make to move from experimentation to a governed, repeatable pipeline.

- Start with outcomes, not use cases. Define what “better” means in business terms (e.g., conversion, cost-to-serve, cycle time, error rate, compliance incidents), not just productivity gains.

- Map the end-to-end workflow. Identify where decisions are made, where handovers happen, and where errors or delays occur today.

- Choose one “thin slice” for the pilot. Select a narrow but end-to-end process segment where results can be measured quickly and governance can be stress-tested.

- Define agent roles and decision rights. Specify what each agent may do, what it may not do, and what triggers escalation to a human.

- Design the human-in-the-loop checkpoints. Decide where judgment is required (e.g., approvals, exceptions, high-risk outputs) and make those checkpoints explicit.

- Curate the knowledge base and guardrails. Ensure that agents work from approved, versioned sources (policies, claims, playbooks, templates) rather than unconstrained internet-style generation.

- Separate sensitive data wherever possible. Put clear controls around PII and confidential data; design agents to use abstractions or aggregated signals when feasible.

- Build in transparency and auditability. Log inputs, outputs, actions, and handovers so decisions can be traced, explained, and reviewed.

- Measure both performance and risk. Track outcome metrics alongside quality and governance indicators (e.g., unverifiable claims, policy violations, hallucinations, audit exceptions, human intervention rates).

- Scale only when the system is stable under governance. Expand scope and autonomy gradually, based on evidence that outcomes improve and risk remains controlled.

In short: winning with agentic AI is less about deploying smarter models and more about designing accountable pipelines that can be trusted at scale.

Why This Matters Across the Enterprise

Although many early applications of agentic AI emerge in customer-facing functions, the underlying principles apply far more broadly. Any organisational area characterised by repetitive decision-making, multiple handovers, and high requirements for quality or compliance can benefit from agentic pipelines.

The strategic shift is subtle but profound. Leaders stop asking how AI can make individual employees more productive and start asking how work itself should be redistributed between humans and machines. This reframes AI from a productivity tool into a lever for organisational redesign.

Conclusion: Accountability Over Autonomy

Agentic AI is often framed as a story of autonomy. In reality, its true power lies in accountability. The organisations that succeed will not be those that give machines the most freedom, but those that design the clearest roles, constraints, and measures of success.

Agentic AI does not replace human judgment. It amplifies that judgment by taking responsibility for execution where rules are clear and escalation paths are defined. When embedded into well-designed pipelines, it enables organisations to move beyond experimentation toward durable advantage.

The real transformation, then, is not technological. It is organisational. Those who recognise this will move decisively from pilots to pipelines – and from AI hype to sustained impact.