A Manager’s Introduction to Human-Centered Artificial Intelligence

By Mark Esposito, Terence Tse, Aurelie Jean and Josh Entsminger

As AI advances, there is a need for better frameworks to understand how to create value from changing human-AI relationships. This article offers a practical guide for managers and executives looking to leverage human-centered approaches to AI.

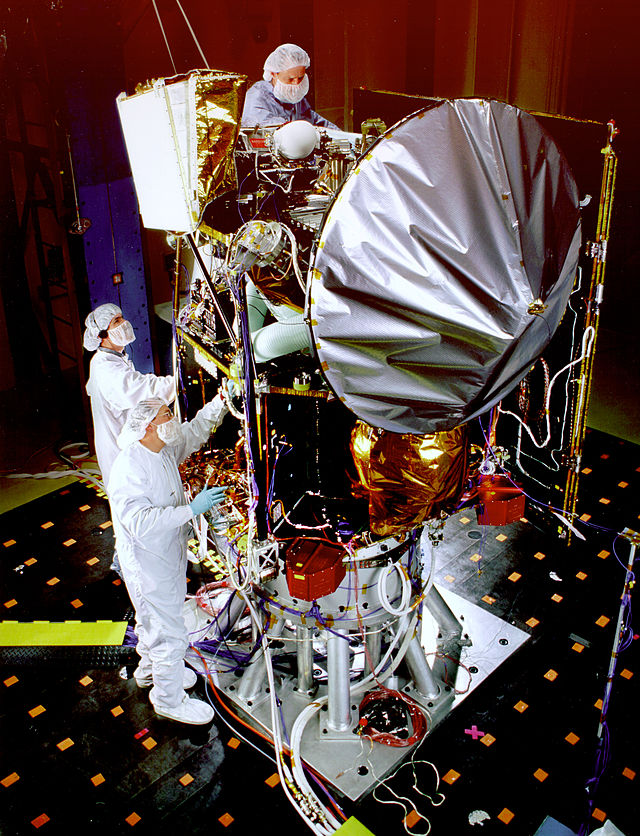

“I think the problem was that our systems designed to recognise and correct human error failed us.”1 This was the explanation given in 1999 by Carl Pilcher, science director for solar system exploration at NASA’s Jet Propulsion Laboratory, when a failed measurement conversion led to the loss of the $125 million Mars Climate Orbiter. A similar problem followed 14 years later, when a calculation error in the design of a new Spanish submarine2 led to a fatal flaw: the submarine could submerge but could not resurface, an error that delayed a 2.2 billion dollar investment by years. Each of these errors was a simple issue of calculation and translation, ultimately a matter of mistaken units or decimal points. So, what if a system could automatically catch all such errors?

Naturally, this possibility has already been investigated, with novel artificial intelligence (AI) systems being deployed across sectors to reduce rates of human error. Across such projects a common theme has emerged: AI systems may be good at correcting problems that have been identified, but they are not yet very good at independently identifying what counts as a problem beyond what was explicitly specified. The challenge of using AI therefore goes further, as it is not simply a matter of correcting the mistake but of alerting people that there is a mistake to be corrected.

This identification problem has been joined by a host of similar issues that create difficulties for firms trying to build, buy, deploy, and change artificial intelligence solutions. Fundamental to all these problems is a lack of common principles and guidelines shaping how organisations understand the value of AI and what people want from it. As the adoption and development of AI progresses, the latter point, what value AI should provide, is occupying a larger space in public attention. AI often brings out automation anxiety over the possible replacement of people’s competitive advantage across tasks and jobs, rather than its use to accentuate a person’s advantages – whether AI should help humans catch errors, or replace humans where they produce errors.

As AI advances, managers and executives need better frameworks to understand how to create value from changing human-AI relationships. Human-Centered AI is emerging as just such a framework to help firms orient themselves under rapidly changing technology, to better balance ethical principles and successful use, and to better identify what counts as an ethical and successful use of AI at all.

WHY AI IS NEVER JUST AI

To understand how to leverage human-centered AI, managers first need to understand changes facing AI management. This begins with three fundamental points:

First, that the AI technology itself is far more than the algorithm; rather, AI is the umbrella term for a class of systems integrating talent capable of creating and augmenting AI solutions, the AI algorithm designs themselves, valuable data sets and data management strategies, data capturing devices, and computational power to train and run the solutions. Each of these elements has its own development path, and the unique combination of them is what generates a competitive solution.

Second, that successful AI projects are less about the tech than the institutions and practices to which they are connected. This can be split between the organisation deploying the solution and the expected end users. On the side of the organisation comes the need for buy-in from existing staff, training on how to use the solution, and an effective feedback design; on the side of the user comes a host of additional dimensions relating to the user-experience and interface design of any given system.

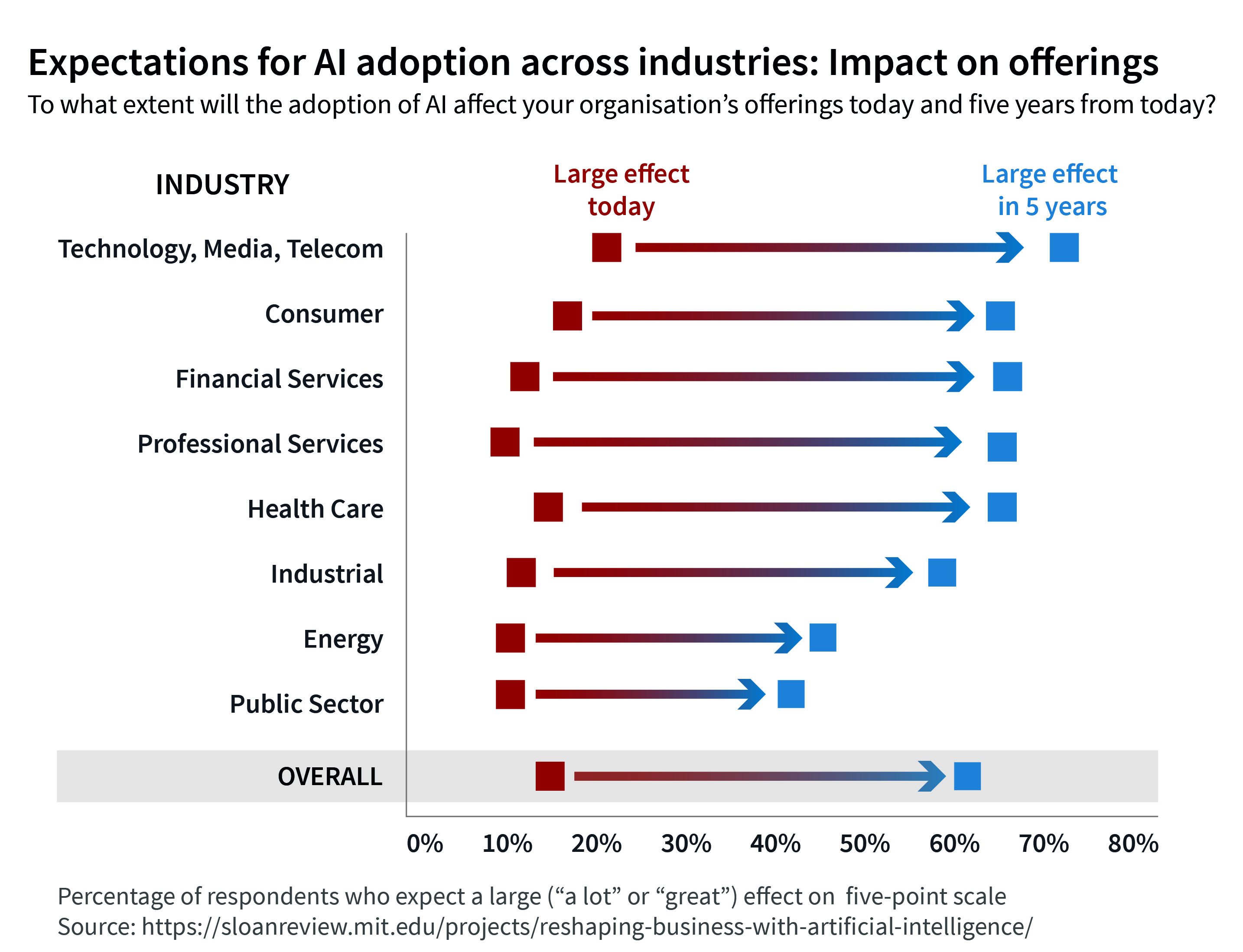

Third, that AI systems evolve, and so do the institutions and practices once AI is used. So, the question for managers is not the next 6 months, but the next 5 years of expected evolutions across the elements and institutions, to ‘look around the corner’ at what’s next in value creation. A lack of clear understanding of unique development paths not only for the firm but the larger market for the institutions and elements of a successful AI system can lead to delayed development, unsuccessful implementation, and reduced effectiveness at scale.

AI is never just AI. Each of these three points carries a warning for firms looking to leverage AI to build advantages: attempting to acquire a solution without the requisite talent to manage and implement it can lead to failure. Each also underlines that, because AI is a system, individual elements can advance at different rates – a firm can acquire data sets without the talent, new algorithms can emerge without appropriate data to be trained on, and new data capture systems can advance without appropriate data management.

But these backend levels have considerable influence on how people understand their relationship with AI and the opportunities afforded. AI is a device for augmenting cognition: it reshapes what a person needs to think about for a task. Consider the calculator as a service for storing and organising mathematical information; its function is to remove the need for a human to perform any such calculation mentally, deferring it to the technology.

[ms-protect-content id=”9932″]

UNDERSTANDING HUMAN CENTERED ARTIFICIAL INTELLIGENCE

So with the context of the AI ecosystem, let’s go back to the Mars orbiter briefly. The basic problem identified was a lack of oversight into unit measurement, coming from the multi-national collaboration that went into the program. But the question is not simply oversight; it’s how a problem would be identified for the engineers and overall staff. When transferring designs, should there have been an automated checklist of known problems which flagged inconsistencies in projections?

The question quickly seems to become one of designing appropriate user-experiences that inform staff of potential issues in the calculation, but the issue extends far beyond any given user-experience to the context of how design and management staff understand the problems and needs of any such users. It’s not just about identifying that the unit measurement is an issue, but about the best way of informing individuals at each step of the design process that inconsistencies might be an issue.
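As a purely illustrative sketch of the kind of automated check discussed above, the Python snippet below compares the unit a receiving team expects with the unit a supplying team actually used, and returns a plain-language warning rather than silently converting. The `Quantity` class, the `check_handoff` function, and the small unit table are hypothetical names introduced for this example; they are not taken from any NASA system or from the authors.

```python
from dataclasses import dataclass

# Hypothetical conversion table for this sketch: factors to newton-seconds.
# 1 pound-force is defined as exactly 4.4482216152605 newtons.
TO_NEWTON_SECONDS = {
    "N*s": 1.0,
    "lbf*s": 4.4482216152605,
}


@dataclass(frozen=True)
class Quantity:
    """A number that always travels with its unit label."""
    value: float
    unit: str


def check_handoff(expected_unit: str, supplied: Quantity) -> list:
    """Return human-readable warnings for a value passed between teams."""
    warnings = []
    if supplied.unit not in TO_NEWTON_SECONDS or expected_unit not in TO_NEWTON_SECONDS:
        # The check cannot decide, so it says so instead of guessing.
        warnings.append(
            f"Unknown unit in handoff ('{supplied.unit}' -> '{expected_unit}'): "
            "please confirm the units with the supplying team."
        )
    elif supplied.unit != expected_unit:
        converted = (
            supplied.value
            * TO_NEWTON_SECONDS[supplied.unit]
            / TO_NEWTON_SECONDS[expected_unit]
        )
        warnings.append(
            f"Unit mismatch: received {supplied.value} {supplied.unit}, "
            f"expected {expected_unit}. Converted value would be "
            f"{converted:.3f} {expected_unit}."
        )
    return warnings


# Example: one team reports impulse in pound-force seconds,
# while the receiving software expects newton-seconds.
for message in check_handoff("N*s", Quantity(10.0, "lbf*s")):
    print(message)
```

The design choice worth noting is that the check reports the mismatch to a person in readable terms, which reflects the point that the value lies as much in how staff are informed as in the detection itself.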

Humans are inconsistent, idiosyncratic, and intuitive, developing their own means of making sense of the world, and effective AI solutions need to take that into account in order to be desirable and maximise their usability. While the nuances here will be explored as long as AI is applied in business, this raises the question of the larger context, namely: what role should AI play in any given human-AI interaction, in any given organisation, in any given job? What do we as humans want AI to do?

Therein lies the fundamental point of human-centered AI – to design and leverage artificial intelligence for enhancing human capabilities in an effective, intelligible, and ethical manner. The first and last consideration is what human institution, behaviour, or practice the AI is intended to help shape, what it is intended to alleviate, and, by shaping or alleviating, what it will serve to do in the larger social sense. This definition is necessarily vague, in so far as the approach needs to help organise and align a variety of perspectives and definitions concerning what counts as enhanced capabilities, effective solutions, intelligible solutions, and ethical solutions. Human-centered AI exceeds any consideration of user-experience.

ENSURING ETHICALLY ALIGNED AI

So now the organisation has decided that the AI is desirable and has understood the context for whether the use of AI will enhance or inhibit human capabilities. It now needs to further understand the manner of enhancing those capabilities in the nuances of AI system creation and usage.

This means ensuring that the AI being designed or bought is aligned with the ethical principles of the firm and stakeholders.

Fairness

AI solutions are often designed as aids to existing decision-making processes. AI for recruitment often serves either to reduce the time burden of reviewing initial resumes to sort for the top 5, or to assess additional data-points during an interview that might otherwise go unnoticed by a recruiter. The question is then whether this process helps to unburden recruiters and candidates of biases so that the process is more meritocratic, or whether the process affirms prior biases, assumptions, stereotypes, or conventions.

The question of fairness is precisely the question of how an organisation understands and manages its potential to discriminate, whether intended or unintended, across all decision-making contexts. AI only works with the material given, and much prior data reflects past discriminatory and biased beliefs and behaviours, again whether intended or not. Hiring data at Amazon reflected a male-dominated tech sector, and so discriminated against female candidates when used to train an AI resume assessment system.
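One simple way a team might begin to examine this kind of risk is to compare how often a screening system shortlists candidates from different groups. The sketch below is a minimal illustration, not a complete fairness audit: the function names and the toy decisions are hypothetical, and the 0.8 threshold is an assumption borrowed from the "four-fifths" rule of thumb used in some US employment contexts.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to the share of its candidates that were shortlisted.

    `decisions` is an iterable of (group, shortlisted) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, shortlisted in decisions:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was shortlisted in any group
    return min(rates.values()) / highest


# Hypothetical toy data, invented purely for illustration.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
ratio = impact_ratio(rates)

REVIEW_THRESHOLD = 0.8  # assumption: four-fifths rule of thumb
if ratio < REVIEW_THRESHOLD:
    print(f"Impact ratio {ratio:.2f} is below {REVIEW_THRESHOLD}: review for bias.")
```

A low ratio does not prove discrimination, and a high one does not rule it out; the figure is only a prompt for people to look more closely at the data and the decision process.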

Intelligibility and Transparency

However, to understand whether a solution is fair or effective, organisations need a means of deciphering the AI outputs. This problem tends to go by different names – explainable AI (XAI), transparent AI, or intelligibility.

This problem arises from the black box problem – while inputs are understood, the outputs often cannot be understood in relation to the reasoning or rules an AI developed to produce those outputs; we can see that a neural network was trained on pictures of cats and dogs to identify each, but we cannot understand the specific weighting and rules it created to generate such identification patterns.

Privacy

AI generates rules from specifications – these specifications can be engineered by humans, as in the case of AlphaGo, or, as in almost all cases, they are derived from data. The higher the quality of the data, the better the outputs can be expected to perform; this, importantly, does not mean more data in every case. In many cases the data is collected without the consent of those it describes or affects. The most prominent case is the training and use of modern facial recognition systems.

Autonomy

All AI solutions make a claim on the problems of a firm and the benefits of using human labour by being created as a mechanism which can enable human skills, replace them, or serve as a hybrid of the two. Consider designing a chat-bot to take over redundant questions for consumer information rather than taking over customer service overall, or designing an AI system that predicts and aids humans with writing rather than writing the article. These are extreme dichotomies which are unfortunately still reflected in the market. An autonomy-conscious design team will work to understand the nuances of a given task, the decisions made relative to that task, and what kinds of cognitive labour are redundant and constraining, and so can be automated to enable a human to excel.

WHO IS THE HUMAN IN HUMAN-CENTERED?

Even if only understood superficially, human-centricity should serve as an essential design reminder to consider the needs and problems of real people in real contexts, to understand how AI will impact how people actually behave rather than how a company wants them to behave. The approach with the greater probability of success is the nuanced approach, which understands human centricity in terms not only of the wide chain of potential end users but the systems in which they make decisions.

So, just as AI is never just AI, human-centered AI is not just about the nuances of individual experiences and needs; rather, organisations should take a systemic approach, understanding how those needs emerge, change, and are susceptible to manipulation.

The argument here can be put directly – AI can be fully expected to shape how people’s needs are expressed, understood, and changed. As such, organisations have a demand and a responsibility to understand not only how to address those problems but how any methods might create unintended consequences in turn.

In short, the best human-centered design understands the system into which humans are placed, where they make decisions, and how the decisions can impact them downstream.

WHAT SHOULD MANAGERS AND EXECUTIVES CONSIDER?

This article was written with the intention of helping guide how managers and executives navigate the wide range of potential developments in human-AI relations. To that end, we can synthesise a set of general recommendations for managers and executives looking to generate or leverage human-centered approaches to AI, in particular across two key decision spaces: what to do when building and what to do when buying.

Managers can begin with a general investigation built around three orienting concerns:

1. What is the problem or need to which AI will be applied and what is the desired outcome?

2. What kinds of decision are implied by the problem and who will be making those decisions?

3. What are the environments3 in which those decisions will be made and how can that decision making environment change?

Within these three concerns is the necessary empathic context for understanding the larger perspective of generating human-centered design in the context of AI. Addressing them will aid in understanding the context of human decision makers, the problem they are facing, and whether AI is the appropriate solution, or an aid to a given solution, in the context of those humans and that problem.

For Building and Buying Human-Centered AI

A company which has internal staff capable of generating machine learning solutions often has a fundamental advantage over other firms, as solutions are not only difficult to develop but often require considerable maintenance. However, most firms lack the internal capabilities to develop novel and efficient solutions. As such, most firms tend to use externally sourced off-the-shelf solutions, developed with a mind towards optimising labour costs. The following are some ideas worth exploring as managers attempt to integrate the symbio-intelligence that human-centered AI can foster:

1. Ensure cognitive diversity in design and implementation teams to avoid blind spots of experience, considerations, and understanding of human experience.

2. Ensure the management and external firm staff have a common understanding of the problem, needs, and value the AI can supply.

3. Avoid introducing ‘artificial stupidity’ into your business by clarifying more precisely what AI is supposed to do, and how people will interact with it in the context of existing processes.

4. Establish clear processes for ‘changeability management’ to control and frame any updates from new training and data to the AI system.

5. Establish clear processes for assessing mental models across the user chain.

Concluding Thoughts

The road ahead is uncertain and not easy to decipher. Some challenges can only be understood as certain milestones are achieved and new ethical and societal barriers are brought down by the art of good practice and inspired leadership. Still, the collective thinking required ahead will become a competitive necessity if we want the next decade to be the one marked in history as the period when humans understood that the real power of this technology lies not in technology for its own sake, but in the improvement of social constructs and paradigms currently so in need of a true reset button.

[/ms-protect-content]

About the Authors

Mark Esposito, Ph.D is Co-founder of Nexus FrontierTech, a leading global firm providing AI solutions to a variety of clients across industries, sectors, and regions. In 2016 he was listed on the Radar of Thinkers50 as one of the 30 most prominent business thinkers on the rise, globally. Mark has worked as Professor of Business & Economics at Hult International Business School and at Thunderbird Global School of Management at Arizona State University and has been on the faculty of Harvard University since 2011. Mark is the co-author of the bestsellers “Understanding How the Future Unfolds: Using DRIVE to Harness the Power of Today’s Megatrends” and “The AI Republic”, which received global accolades.

Terence Tse, Ph.D is a co-founder and Executive Director of Nexus FrontierTech, a London-based tech company specialising in the development and integration of AI solutions that help organisations save time, money and resources by tackling process inefficiencies and data waste. He is also a Professor at the London campus of ESCP Europe Business School. Terence’s most recent book is “The AI Republic: Building the Nexus Between Humans and Intelligent Automation”. He has written more than 110 articles and regularly provides commentaries on the latest current affairs, including technology-driven transformations, the future of work and education, artificial intelligence and blockchain, in many outlets, and acts as a speaker on these subjects around the world.

Aurelie Jean, Ph.D has been working for more than 10 years in computational sciences, applied to engineering, medicine, education, finance, and journalism. Aurélie worked at MIT and Bloomberg. Today, Aurélie works and lives between the USA and France to run In Silico Veritas, an agency in analytics and computer simulations. Aurélie is an advisor at the BCG and Altermind, a mentor at the FDL at NASA, and an external collaborator for The Ministry of Education of France. Aurélie is also a science editorial contributor for Le Point and Elle International, teaches Algorithms in Universities, and conducts research on predictive algorithms.

Josh Entsminger is an applied researcher in technology and politics. He currently serves as a fellow at the PublicTech lab, senior fellow at Ecole des Ponts Business School, and fellow at Nexus FrontierTech. He is currently a doctoral candidate in public sector AI at UCL’s Institute for Innovation and Public Purpose.

References

1. https://www.latimes.com/archives/la-xpm-1999-oct-01-mn-17288-story.html

2. https://o.canada.com/news/spain-builds-submarine-70-tons-too-heavy

3. https://www.wired.com/brandlab/2018/05/ai-needs-human-centered-design