Artificial Intelligence has been fascinating us for a few years with its explosive evolution. But how does it work? And when did it appear?

Created in our image

It all started in our mythology, actually. Millennia ago, the inhabitants of ancient Mesopotamia composed hundreds of beautiful legends. One of them tells how the goddess Mami created humans only to “lighten the toiling of gods”: shaped from clay, newborn humanity was destined to grow barley and date palms and to brew wheat beer to satiate the heavenly rulers.

Then Hebrew folklore invented the Golem, an anthropomorphic being that would execute orders written on a piece of paper placed in its mouth (which sounds a lot like coding a command). The Golem, too, was used for hard and tedious labor, just as humans had been exploited by the Sumerian Anunnaki before.

Finally, the Ultimate Worker myth reached the medieval era, where it attached itself to the name of Albert the Great. According to one story, he built a marvelous automaton named “Android”, which spooked Albert’s best student, Thomas Aquinas. Unable to stand the automaton’s theological reasoning, Aquinas eventually destroyed the “blasphemous mannequin”.

It seems people have always wanted a miraculous assistant to ease their lives, and neural networks seem fit for the role. They are used for biometric spoofing, restoring antique footage, diagnosing serious diseases, and even writing pickup lines. But it was a long road to get here.

Sweet cherry AI

It was 1943. The Nazis were reeling from their fiasco at Stalingrad. Italy was about to surrender to the Allies. And Glenn Miller’s band was the chart-topping musical act of the world.

Amidst all that historical chaos, another epoch-making event was taking place. Warren McCulloch and Walter Pitts combined their knowledge of neuropsychology, logic, and mathematics to embody their concept of connectionism.

Their model was a handful of electric circuits wired to emulate thinking: a computational model of a neuron made of two major components, g and f.

Component g emulated the mechanics of a brain dendrite, a protoplasmic extension of a nerve cell that receives signals and passes them on to the cell body: it aggregates (sums up) the incoming signals. Component f then makes the final decision, much like our frontal lobe, by checking whether that sum reaches a threshold.

McCulloch and Pitts suggested a revolutionary idea: the enigma of human reasoning and decision-making could be explained by a simple binary choice between a True and a False response. Each neuron makes only such a choice; it’s just that we have about 86 billion neurons, so our logic can perform quite complex operations!
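To make the g/f split concrete, here is a minimal sketch of a McCulloch-Pitts neuron in its common textbook form, assuming binary inputs, a sum for g, and a fixed threshold `theta` for f. The optional `inhibitory` inputs follow the original model's rule that any active inhibitory input blocks firing; all function and parameter names are our own illustrative choices.

```python
from typing import Sequence


def g(inputs: Sequence[bool]) -> int:
    """Aggregation (the 'dendrite'): count how many inputs are active."""
    return sum(inputs)


def f(total: int, theta: int) -> bool:
    """Decision: fire (True) only if the aggregated input reaches threshold theta."""
    return total >= theta


def mp_neuron(inputs: Sequence[bool], theta: int,
              inhibitory: Sequence[bool] = ()) -> bool:
    """McCulloch-Pitts unit: any active inhibitory input vetoes firing outright."""
    if any(inhibitory):
        return False
    return f(g(inputs), theta)


# Three binary inputs with a threshold of 2: fires when at least two are active.
print(mp_neuron([True, False, True], theta=2))   # True
print(mp_neuron([True, False, False], theta=2))  # False
```

Note that the output is always a plain True or False: whatever the inputs, the unit only ever makes the binary choice described in the paragraph above.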

Our logic then employs the so-called Boolean operators, three simple words: NOT, AND, and OR (plus AND NOT). They lay the groundwork for decision-making by weighing the numerous variables that accompany a given situation.
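Each of these operators can be built from a single threshold unit. The sketch below assumes the standard McCulloch-Pitts conventions (binary inputs, integer threshold, absolute inhibition); the gate names are hypothetical helpers of our own, and the thresholds are one valid choice among several.

```python
from typing import Sequence


def mp_neuron(inputs: Sequence[bool], theta: int,
              inhibitory: Sequence[bool] = ()) -> bool:
    """McCulloch-Pitts unit: inhibition vetoes firing; otherwise threshold the count."""
    if any(inhibitory):
        return False
    return sum(inputs) >= theta


def not_gate(a: bool) -> bool:
    return mp_neuron([], theta=0, inhibitory=[a])  # fires by default unless a inhibits it


def and_gate(a: bool, b: bool) -> bool:
    return mp_neuron([a, b], theta=2)  # both inputs are needed


def or_gate(a: bool, b: bool) -> bool:
    return mp_neuron([a, b], theta=1)  # one input suffices


def and_not_gate(a: bool, b: bool) -> bool:
    return mp_neuron([a], theta=1, inhibitory=[b])  # a, unless b


# Print the truth table: a, b, AND, OR, AND NOT, NOT a
for a in (False, True):
    for b in (False, True):
        print(a, b, and_gate(a, b), or_gate(a, b), and_not_gate(a, b), not_gate(a))
```

The only thing that changes between AND and OR is the threshold, which is exactly why McCulloch and Pitts could treat a neuron as a tiny logic gate.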

Imagine being a prehistoric person. You’re hungry, and your gaze falls upon a napping megatherium, a giant ground sloth. You have to decide whether to attack your potential prey or leave it be. Your brain will run a quick calculation over these variables:

- I’m hungry.

- I’m alone and unarmed.

- The sloth is too big or too heavy.

- The operation is dangerous and not simple.

Triggered by this logical chain, your troglodyte prudence would eventually kick in and save your life.
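The hunter’s reasoning can be wired out of the same threshold units. This is a hypothetical wiring: the article lists the variables but not how they combine, so the grouping below (helpless = alone AND unarmed; oversized = big OR heavy; risky = dangerous AND NOT simple; attack = hungry AND NOT risky) is our own assumption.

```python
from typing import Sequence


def mp_neuron(inputs: Sequence[bool], theta: int,
              inhibitory: Sequence[bool] = ()) -> bool:
    """McCulloch-Pitts unit: inhibition vetoes firing; otherwise threshold the count."""
    if any(inhibitory):
        return False
    return sum(inputs) >= theta


def should_attack(hungry: bool, alone: bool, unarmed: bool,
                  too_big: bool, too_heavy: bool, simple: bool) -> bool:
    """Hypothetical chain of threshold units deciding whether to hunt the sloth."""
    helpless = mp_neuron([alone, unarmed], theta=2)                # alone AND unarmed
    oversized = mp_neuron([too_big, too_heavy], theta=1)           # big OR heavy
    dangerous = mp_neuron([helpless, oversized], theta=1)          # helpless OR oversized
    risky = mp_neuron([dangerous], theta=1, inhibitory=[simple])   # dangerous AND NOT simple
    return mp_neuron([hungry], theta=1, inhibitory=[risky])        # hungry AND NOT risky


# The scenario from the list: hungry, alone, unarmed, huge sloth, not simple.
print(should_attack(hungry=True, alone=True, unarmed=True,
                    too_big=True, too_heavy=True, simple=False))  # False: leave it be
```

Hunger alone excites the final unit, but the "risky" signal inhibits it outright; that inhibition is the prudence that saves the hunter.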

Mark my Mark I

In 1949, Donald Hebb published The Organization of Behavior. Its central idea was that the connection between neurons grows stronger each time they are active together, so neural pathways become more robust with every use. This mechanism, for example, helps us memorize new things and learn new skills as we repeat an action over and over.
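Hebb stated his idea in words; the rule usually attached to his name today is the formalization Δw_i = η · x_i · y, where x_i is the activity of an input neuron, y the activity of the receiving neuron, and η a learning rate. The sketch below assumes that formalization; the function name and η = 0.1 are illustrative choices.

```python
def hebbian_update(weights: list[float], x: list[float], y: float,
                   eta: float = 0.1) -> list[float]:
    """Strengthen each weight in proportion to input activity times output activity."""
    return [w + eta * xi * y for w, xi in zip(weights, x)]


weights = [0.0, 0.0]
# Input 0 fires together with the output on every repetition; input 1 never fires.
for _ in range(5):
    weights = hebbian_update(weights, x=[1.0, 0.0], y=1.0)

print(weights)  # first weight has grown to about 0.5, second is still 0.0
```

Repetition is what does the work here: only the pathway that is used over and over gets stronger.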

All these avant-garde ideas from the 1940s caught the attention of Frank Rosenblatt, who focused on exploring human cognition. He had to start with flies, though: he was deeply intrigued by the decision mechanism that makes a tiny insect flee from danger.

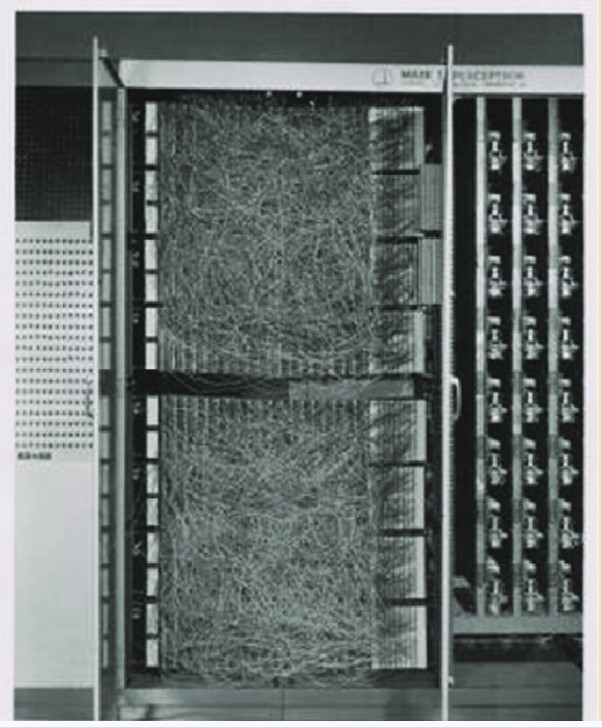

His musings led to the Mark I Perceptron, the first physical manifestation of artificial intelligence. This behemoth of a contraption was an attempt to recreate human vision, together with a brain-like algorithm that could recognize pictures.

Mark I had a camera equipped with a 20×20 grid of cadmium sulfide photocells, so it could snap 400-pixel images. Its learning mechanism, quite primitive, was largely based on the McCulloch-Pitts neuron and could only separate classes that are linearly separable, that is, divisible by a single straight line (or flat plane). Anything non-linear was, figuratively speaking, enough to short-circuit Mark I’s brain.
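The limitation is easy to see with the perceptron learning rule in its standard textbook form: after each mistake, every weight moves by `lr * (target - output) * input`. The sketch below uses two-input truth tables as toy data instead of Mark I’s photocell images: AND, which a straight line can separate, and XOR (true when exactly one input is true), which it cannot. Names and the epoch count are our own choices.

```python
from typing import Sequence

Sample = tuple[tuple[int, int], int]


def predict(w: Sequence[float], b: float, x: Sequence[int]) -> int:
    """Threshold unit: output 1 if the weighted sum plus bias is positive."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


def train_perceptron(data: Sequence[Sample], epochs: int = 20,
                     lr: float = 1.0) -> tuple[list[float], float, int]:
    """Perceptron rule; returns weights, bias, and mistakes in the final epoch."""
    w, b = [0.0, 0.0], 0.0
    errors = 0
    for _ in range(epochs):
        errors = 0
        for x, target in data:
            delta = target - predict(w, b, x)
            if delta:  # only mistakes change the weights
                errors += 1
                w = [wi + lr * delta * xi for wi, xi in zip(w, x)]
                b += lr * delta
    return w, b, errors


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

print(train_perceptron(AND)[2])  # 0: a separating line was found
print(train_perceptron(XOR)[2])  # more than 0: no line separates XOR
```

On AND the mistakes stop after a few epochs; on XOR they never do, no matter how long training runs.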

Slow rise to fame

Mark I was to machine learning what Gutenberg’s press was to the world. Its architecture, stacked into multiple layers, is essentially what today’s neural networks use. And they take care of millions of tasks: deepfake detection, autonomous driving, stock price prediction, and even saving kidnapped children with facial recognition.
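What stacking buys is easiest to see on XOR (true when exactly one input is true), which a single perceptron-style unit cannot compute. A minimal sketch, assuming hand-picked weights rather than learned ones: one hidden layer computes OR and NOT-AND, and an output unit combines them with AND. Modern networks learn such weights and use smooth activations instead of this hard threshold.

```python
def step(z: float) -> int:
    """Threshold activation, as in a perceptron unit: fire (1) if z > 0."""
    return 1 if z > 0 else 0


def xor_net(x1: int, x2: int) -> int:
    """Two stacked layers of threshold units computing XOR (hand-set weights)."""
    h_or = step(x1 + x2 - 0.5)        # hidden unit 1: x1 OR x2
    h_nand = step(-x1 - x2 + 1.5)     # hidden unit 2: NOT (x1 AND x2)
    return step(h_or + h_nand - 1.5)  # output unit: h_or AND h_nand


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_net(a, b))
```

Each unit on its own still draws only a straight line; the second layer combines two lines into a region no single line could carve out.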

Shortly after Mark I’s debut, Bernard Widrow and Marcian Hoff developed ADALINE and MADALINE. MADALINE became the first neural network applied to a real-world problem: an adaptive filter that removes echoes from telephone lines. Fun fact: this technology is still in use today.
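ADALINE’s key difference from the perceptron is that it learns from its linear output, using the least-mean-squares (Widrow-Hoff) rule `w += mu * error * x`. Used as an adaptive filter, that rule can learn to predict and subtract a line echo. The sketch below is a toy echo canceller, not the historical design: it assumes a noise-free line, a random far-end signal, and a made-up three-tap echo path, and all names are hypothetical.

```python
import random


def lms_identify(echo_path: list[float], n_samples: int = 5000,
                 mu: float = 0.05, seed: int = 0) -> list[float]:
    """ADALINE-style LMS filter learning an unknown (hypothetical) echo path."""
    rng = random.Random(seed)
    taps = len(echo_path)
    w = [0.0] * taps
    history = [0.0] * taps  # most recent far-end sample first
    for _ in range(n_samples):
        history = [rng.uniform(-1.0, 1.0)] + history[:-1]
        d = sum(h * x for h, x in zip(echo_path, history))  # echo heard on the line
        y = sum(wi * x for wi, x in zip(w, history))         # filter's linear output
        e = d - y                                            # residual echo
        w = [wi + mu * e * x for wi, x in zip(w, history)]   # Widrow-Hoff (LMS) update
    return w


true_echo = [0.6, -0.3, 0.1]  # hypothetical echo path, for illustration only
print([round(wi, 3) for wi in lms_identify(true_echo)])  # close to [0.6, -0.3, 0.1]
```

Once the taps match the echo path, subtracting `y` from the line signal cancels the echo; on a real line the filter keeps adapting as conditions change.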

But then AI’s evolution stalled. In 1969, Marvin Minsky and Seymour Papert published the book “Perceptrons”, which showed that Rosenblatt’s single-layer perceptron cannot learn functions that are not linearly separable, such as XOR (exclusive OR). Multi-layered neural networks could overcome this limit, but in simple terms, there wasn’t enough computational power at the time to make them practical. As a result, machine learning lost almost all of its funding.
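The XOR limitation has a one-line proof: a unit firing when w1·x1 + w2·x2 + b > 0 would need b ≤ 0, w1 + b > 0, w2 + b > 0, and w1 + w2 + b ≤ 0; adding the middle two gives w1 + w2 + b > −b ≥ 0, a contradiction. The sketch below illustrates (but does not prove) the same point by brute force over a small weight grid of our own choosing: many settings compute AND, none compute XOR.

```python
from itertools import product

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]


def unit(w1: float, w2: float, b: float, x1: int, x2: int) -> int:
    """Single threshold unit, like one perceptron neuron."""
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0


def count_solutions(targets: list[int], grid: list[float]) -> int:
    """Count (w1, w2, b) settings on the grid that reproduce the truth table."""
    return sum(
        all(unit(w1, w2, b, *x) == t for x, t in zip(INPUTS, targets))
        for w1, w2, b in product(grid, repeat=3)
    )


grid = [i / 2 for i in range(-6, 7)]  # -3.0 to 3.0 in steps of 0.5
print(count_solutions([0, 0, 0, 1], grid))  # AND: many settings work
print(count_solutions([0, 1, 1, 0], grid))  # XOR: none on this grid
```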

But the 1980s would become a true renaissance for artificial intelligence. Older ideas like backpropagation, initially introduced in 1969, were unearthed from piles of research papers. New architectures were designed. Complementary metal-oxide-semiconductor (CMOS) chips provided new computational power. And, of course, a faint glimpse of commercial potential began to break through.
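Backpropagation is the chain rule applied layer by layer, from the output back toward the inputs, to get the gradient of the error with respect to every weight; gradient descent then nudges each weight against its gradient. A minimal sketch, assuming a tiny 2-2-1 network with sigmoid units and squared-error loss (our own choices, with arbitrary parameter values), computes those gradients by backpropagation and checks them against finite differences.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def forward(p: list[float], x: list[float]) -> tuple[list[float], float]:
    """2-2-1 network. p = [w00, w01, w10, w11, b0, b1, v0, v1, c]."""
    h = [sigmoid(p[2 * j] * x[0] + p[2 * j + 1] * x[1] + p[4 + j]) for j in range(2)]
    y = sigmoid(p[6] * h[0] + p[7] * h[1] + p[8])
    return h, y


def loss(p: list[float], x: list[float], t: float) -> float:
    _, y = forward(p, x)
    return 0.5 * (y - t) ** 2


def backprop(p: list[float], x: list[float], t: float) -> list[float]:
    """Gradient of the loss for every parameter, chain rule applied output-first."""
    h, y = forward(p, x)
    delta_out = (y - t) * y * (1 - y)  # dLoss / d(output pre-activation)
    # Push the error back through each output weight into the hidden units.
    delta_hid = [delta_out * p[6 + j] * h[j] * (1 - h[j]) for j in range(2)]
    grad_w = [delta_hid[j] * x[i] for j in range(2) for i in range(2)]
    grad_v = [delta_out * h[j] for j in range(2)]
    return grad_w + delta_hid + grad_v + [delta_out]


def numeric_grad(p: list[float], x: list[float], t: float,
                 eps: float = 1e-6) -> list[float]:
    """Central finite differences, used only to check backprop's answer."""
    grads = []
    for k in range(len(p)):
        up, down = p.copy(), p.copy()
        up[k] += eps
        down[k] -= eps
        grads.append((loss(up, x, t) - loss(down, x, t)) / (2 * eps))
    return grads


params = [0.5, -0.4, 0.3, 0.8, 0.1, -0.2, 0.7, -0.6, 0.05]  # arbitrary values
x, target = [1.0, 0.5], 1.0
gap = max(abs(a - n) for a, n in zip(backprop(params, x, target),
                                     numeric_grad(params, x, target)))
print(gap)  # should be tiny (far below 1e-6)
```

The backward pass costs about as much as one forward pass, while finite differences need two extra forward passes per weight; that efficiency is what made training multi-layered networks practical.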

In the early 1990s, artificial intelligence would land a few gigs, facial recognition among them. By the end of the decade, it would encounter its first serious threats of spoofing and fraud, culminating in 2017, when the first deepfake crawled out of Reddit’s darkest gutters. Who knows where this odyssey will lead next.